Introduction to PBR

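PBR can make rendering more precise and the image better looking. In some simple games, each RGB color channel is typically stored in only 8 bits. The PBR pipeline here upgrades each channel to a 32-bit float, so precision is much higher and exposure problems caused by limited precision no longer need to be worried about.

Rendering this way is generally called HDR rendering. To implement HDR, first create an FBO whose data precision is higher than that of an ordinary one, then add a tone mapping pass that converts the result to SDR (to be filled in later).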

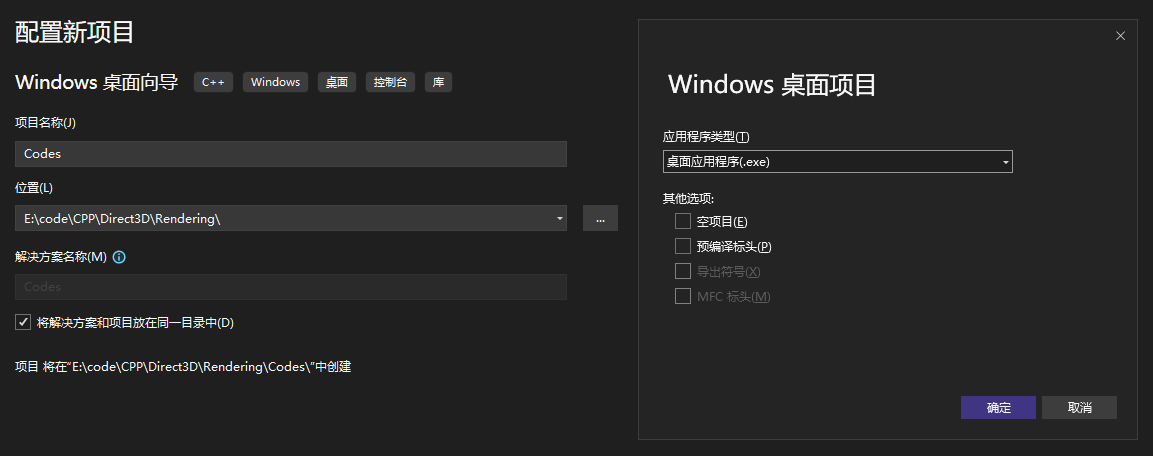
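To make the precision argument concrete, here is a minimal sketch of what happens when a lighting result is stored in an 8-bit channel. The helper names (EncodeUnorm8, DecodeUnorm8, RoundTripUnorm8) are hypothetical and not part of FrameWork; the sketch only assumes the usual behavior of unsigned normalized formats, which clamp to [0, 1] before quantizing.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstdint>

// Hypothetical helper: store a linear value in an 8-bit UNORM channel.
// Values outside [0, 1] are clamped before quantization.
inline std::uint8_t EncodeUnorm8(float value) {
    assert(!std::isnan(value) && "NaN has no meaningful encoding");
    float clamped = std::clamp(value, 0.0f, 1.0f);
    return static_cast<std::uint8_t>(std::lround(clamped * 255.0f));
}

// Hypothetical helper: read the channel back as a float in [0, 1].
inline float DecodeUnorm8(std::uint8_t stored) {
    return static_cast<float>(stored) / 255.0f;
}

// Round trip through 8 bits. A float channel would return the value unchanged.
inline float RoundTripUnorm8(float value) {
    return DecodeUnorm8(EncodeUnorm8(value));
}
```

With these helpers, a radiance of 4.0 and a radiance of 16.0 both come back as 1.0, so the difference between "bright" and "very bright" is lost. A floating-point buffer keeps both values intact until tone mapping.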

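Creating the Project

Remove the extra files the project does not need (keep only Codes.sln, Codes.vcxproj, Codes.vcxproj.filters, and Codes.vcxproj.user), then import FrameWork (this course only covers the PBR implementation; FrameWork itself will be studied later). After that, set up the basic rendering environment: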

Scene.h

#pragma once

void InitScene(int inWidth, int inHeight);

void RenderOneFrame(float inDeltaTime);

Scene.cpp

#include "Scene.h"

#include "BattleFireDirect3D12.h"

void InitScene(int inWidth, int inHeight)

{

}

void RenderOneFrame(float inDeltaTime)

{

RHICommandList rhiCommandList;

FrameBufferRT* rt = BeginRenderFrame(rhiCommandList.mCommandList);

EndRenderFrame(rhiCommandList.mCommandList);

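}

Code breakdown:

InitScene(int inWidth, int inHeight): an initialization placeholder. In DirectX development, this is where resources that depend on the window size are created.

RenderOneFrame(float inDeltaTime): the core rendering logic, called once per frame (for example, 60 times per second).

RHICommandList: a wrapper around ID3D12GraphicsCommandList. Its constructor automatically calls GetCommandList() (which internally performs Reset), and its destructor automatically calls EndCommandList(1) (which internally performs Close and Execute). A raw ID3D12GraphicsCommandList must be Reset before recording each frame, must be Closed before it can be submitted, and requires synchronization after submission.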

struct RHICommandList {

ID3D12GraphicsCommandList* mCommandList;

RHICommandList();

~RHICommandList();

ID3D12GraphicsCommandList* operator->() {

return mCommandList;

}

};

RHICommandList::RHICommandList() {

mCommandList = GetCommandList();

}

RHICommandList::~RHICommandList() {

EndCommandList(1);

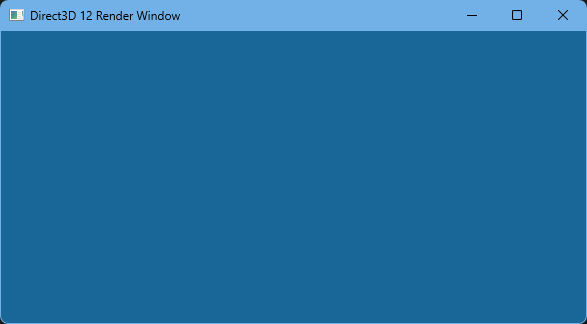

}运行后结果:
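The constructor/destructor pairing used by RHICommandList is the RAII idiom. The sketch below models it without Direct3D so that the call order is easy to observe. MockCommandList, ScopedCommandList, and RecordOneFrame are hypothetical names for illustration only, and the mock merely logs calls instead of talking to a GPU.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Stand-in for ID3D12GraphicsCommandList: it only logs the calls it receives.
struct MockCommandList {
    std::vector<std::string> log;
    bool recording = false;
    void Reset()   { assert(!recording); recording = true;  log.push_back("Reset"); }
    void Draw()    { assert(recording);                     log.push_back("Draw"); }
    void Close()   { assert(recording);  recording = false; log.push_back("Close"); }
    void Execute() { assert(!recording);                    log.push_back("Execute"); }
};

// RAII wrapper: the constructor opens the list, the destructor closes and submits it.
class ScopedCommandList {
public:
    explicit ScopedCommandList(MockCommandList& list) : mList(list) { mList.Reset(); }
    ~ScopedCommandList() { mList.Close(); mList.Execute(); }
    ScopedCommandList(const ScopedCommandList&) = delete;
    ScopedCommandList& operator=(const ScopedCommandList&) = delete;
    MockCommandList* operator->() { return &mList; }
private:
    MockCommandList& mList;
};

// Records one frame. Close and Execute run automatically when `scoped` leaves scope.
inline std::vector<std::string> RecordOneFrame() {
    MockCommandList list;
    {
        ScopedCommandList scoped(list);
        scoped->Draw();
    }
    return list.log;
}
```

Calling RecordOneFrame() yields the sequence Reset, Draw, Close, Execute. Because the closing steps live in the destructor, the caller cannot forget them, and this is presumably why RenderOneFrame only needs to declare an RHICommandList local variable.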

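Building the HDR Rendering Pipeline

In the world of the display (LDR, low dynamic range), a color value can only range from $0.0$ (pure black) to $1.0$ (pure white).

In the physical world, light intensity has no upper bound (the sun is far brighter than a sheet of white paper). A traditional 8-bit color space (0-255) cannot represent that range. We therefore need a "high dynamic range" buffer to store the lighting results, and finally use tone mapping to compress them back into the range the display can show.

An HDR rendering pipeline must have two elements:

High-precision buffer (Floating Point Buffer): a "container" that can store values greater than $1.0$.

Tone mapping: in the final step, compress these large values back into $[0.0, 1.0]$ so the display can show them correctly.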
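The tone mapping pass itself has not been written at this point in the notes. As an illustration of the idea only, the sketch below uses the Reinhard curve x / (1 + x), which is one common choice and not necessarily the operator this course ends up using. The function names and the exposure parameter are assumptions.

```cpp
#include <cassert>

// Reinhard operator: maps [0, +inf) into [0, 1).
// Shown as an example curve; the course may choose a different operator later.
inline float ToneMapReinhard(float hdr) {
    assert(hdr >= 0.0f);
    return hdr / (1.0f + hdr);
}

// Hypothetical exposure parameter: scales the HDR value before the curve is applied.
inline float ToneMapWithExposure(float hdr, float exposure) {
    return ToneMapReinhard(hdr * exposure);
}
```

The curve is monotonic, so brighter inputs stay brighter after mapping: an input of 1.0 maps to 0.5, and even very large inputs stay below 1.0 instead of being clipped.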

Scene.cpp

#include "Scene.h"

#include "BattleFireDirect3D12.h"

#include "FrameBuffer.h"

FrameBuffer* gHDRFBO = nullptr;

void InitScene(int inWidth, int inHeight)

{

gHDRFBO = new FrameBuffer;

gHDRFBO->SetSize(inWidth, inHeight);

gHDRFBO->AttachColorBuffer(DXGI_FORMAT_R32G32B32A32_FLOAT);

gHDRFBO->AttachDepthBuffer();

}

void RenderOneFrame(float inDeltaTime)

{

//ldr

RHICommandList rhiCommandList;

//draw skybox

//draw material sphere

FrameBufferRT* rt = gHDRFBO->BeginRendering(rhiCommandList.mCommandList);

gHDRFBO->EndRendering(rhiCommandList.mCommandList);

delete rt;

rt = BeginRenderFrame(rhiCommandList.mCommandList);

//tone mapping, hdr -> swapchain

EndRenderFrame(rhiCommandList.mCommandList);

delete rt;

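}

Code breakdown:

InitScene creates an HDR FBO (framebuffer object), which acts as an off-screen canvas: the scene is rendered into the FBO first and only afterwards brought to the screen. The code sets the canvas size, allocates video memory for a color buffer in R32G32B32A32_FLOAT format, and attaches a depth buffer.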
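The choice of R32G32B32A32_FLOAT has a memory cost that is easy to estimate. The following sketch computes the raw size of a color buffer for three four-channel formats. ColorBufferBytes and the constants are hypothetical helpers, and the result ignores any alignment or padding the driver may add.

```cpp
#include <cassert>
#include <cstdint>

// Bytes per pixel for three common four-channel color formats.
constexpr std::uint64_t kBytesRGBA8   = 4;   // DXGI_FORMAT_R8G8B8A8_UNORM
constexpr std::uint64_t kBytesRGBA16F = 8;   // DXGI_FORMAT_R16G16B16A16_FLOAT
constexpr std::uint64_t kBytesRGBA32F = 16;  // DXGI_FORMAT_R32G32B32A32_FLOAT

// Hypothetical helper: raw size of one color buffer, ignoring alignment and padding.
inline std::uint64_t ColorBufferBytes(std::uint64_t width, std::uint64_t height,
                                      std::uint64_t bytesPerPixel) {
    assert(bytesPerPixel > 0);
    return width * height * bytesPerPixel;
}
```

At 1920x1080 this gives about 31.6 MiB for the 32-bit float format versus about 7.9 MiB for an 8-bit format, a fourfold increase. That is the price paid for the extra range and precision.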

FrameBufferRT* rt = gHDRFBO->BeginRendering(rhiCommandList.mCommandList);: binds the HDR FBO as the current render target.

struct FrameBufferRT {

Texture mColorBuffer;

Texture mDSBuffer;

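};

This struct bundles the resources in use for the current frame together with their descriptors (RTV/DSV). With just the rt pointer, everything the GPU needs for drawing is immediately available.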
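Because the FrameBufferRT is returned as a raw pointer, the caller has to delete it by hand. One way to make that harder to forget is to take ownership with std::unique_ptr, sketched below. MockFrameBufferRT and MockBeginRendering are hypothetical stand-ins for the framework types, which in reality hold D3D12 handles; this is a suggestion and not how FrameWork itself is written.

```cpp
#include <cassert>
#include <memory>

// Simplified stand-in for FrameBufferRT that counts live instances.
struct MockFrameBufferRT {
    inline static int sLiveCount = 0;   // number of instances currently alive
    MockFrameBufferRT()  { ++sLiveCount; }
    ~MockFrameBufferRT() { --sLiveCount; }
};

// Mimics BeginRendering: returns a heap object the caller is responsible for.
inline MockFrameBufferRT* MockBeginRendering() {
    return new MockFrameBufferRT;
}

// Takes ownership immediately, so the object is freed on every exit path.
inline int RenderWithOwnership() {
    std::unique_ptr<MockFrameBufferRT> rt(MockBeginRendering());
    assert(rt != nullptr);
    int liveDuringFrame = MockFrameBufferRT::sLiveCount;
    return liveDuringFrame;   // rt is destroyed here, no explicit delete needed
}
```

While the frame is being recorded exactly one instance is alive, and after RenderWithOwnership() returns the count is back to zero even though no delete appears in the code.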

This part has a lot of source code, so the explanation is given as comments directly in the code.

FrameBufferRT* FrameBuffer::BeginRendering(ID3D12GraphicsCommandList* inCommandList/* =nullptr */) {

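// The GPU is highly parallel: in the previous frame the HDR texture may have been sampled as a read-only texture. Before writing to it, a barrier must make the GPU wait for those reads and switch the resource from a read state to a write state.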

D3D12_RESOURCE_BARRIER berrierCRT = InitResourceBarrier(mColorBuffer, D3D12_RESOURCE_STATE_GENERIC_READ, D3D12_RESOURCE_STATE_RENDER_TARGET);

inCommandList->ResourceBarrier(1, &berrierCRT);

D3D12_RESOURCE_BARRIER berrierDSRT = InitResourceBarrier(mDSBuffer, D3D12_RESOURCE_STATE_GENERIC_READ, D3D12_RESOURCE_STATE_DEPTH_WRITE);

inCommandList->ResourceBarrier(1, &berrierDSRT);

FrameBufferRT* frameBufferRT = new FrameBufferRT;

frameBufferRT->mColorBuffer.mDescriptorHeap = mRTVDescriptorHeap;

frameBufferRT->mColorBuffer.mFormat = mFormat;

frameBufferRT->mColorBuffer.mResource = mColorBuffer;

frameBufferRT->mColorBuffer.mRTV = mRTVDescriptorHeap->GetCPUDescriptorHandleForHeapStart();

frameBufferRT->mDSBuffer.mDescriptorHeap = mDSVDescriptorHeap;

frameBufferRT->mDSBuffer.mFormat = DXGI_FORMAT_R24G8_TYPELESS;

frameBufferRT->mDSBuffer.mResource = mDSBuffer;

frameBufferRT->mDSBuffer.mRTV = mDSVDescriptorHeap->GetCPUDescriptorHandleForHeapStart();

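// OM (Output Merger): the last stage of the rendering pipeline, where depth/stencil testing, blending, and similar work happen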

inCommandList->OMSetRenderTargets(1, &frameBufferRT->mColorBuffer.mRTV, FALSE, &frameBufferRT->mDSBuffer.mRTV);

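// Define the viewport (the scaling used to map projected coordinates onto the render target) and the scissor rectangle used for clipping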

D3D12_VIEWPORT viewport = { 0.0f,0.0f,float(mWidth),float(mHeight),0.0f,1.0f };

D3D12_RECT scissorRect = { 0,0,mWidth,mHeight };

inCommandList->RSSetViewports(1, &viewport);

inCommandList->RSSetScissorRects(1, &scissorRect);

// Clear the color buffer and the depth/stencil buffer

const float hdrClearColor[] = { 0.0f, 0.0f, 0.0f, 0.0f };

inCommandList->ClearRenderTargetView(frameBufferRT->mColorBuffer.mRTV, hdrClearColor, 0, nullptr);

inCommandList->ClearDepthStencilView(frameBufferRT->mDSBuffer.mRTV, D3D12_CLEAR_FLAG_DEPTH | D3D12_CLEAR_FLAG_STENCIL, 1.0f, 0, 0, nullptr);

return frameBufferRT;

}

rt = BeginRenderFrame(rhiCommandList.mCommandList);: prepares the final output of the HDR pipeline, so that drawing goes to the computer screen (the LDR swap chain).
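BeginRenderFrame has to select the render target view that belongs to whichever back buffer is current, which comes down to simple address arithmetic inside a descriptor heap. The sketch below models that arithmetic with plain integers. MockCpuHandle and OffsetHandle are hypothetical names, and the increment of 32 used in the usage note is an arbitrary example value, since the real one is queried from the device and varies by hardware.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Mirrors the shape of D3D12_CPU_DESCRIPTOR_HANDLE, which wraps a single address.
struct MockCpuHandle {
    std::size_t ptr;
};

// Descriptors in a heap are laid out back to back, `incrementSize` bytes apart.
// In real code the increment comes from GetDescriptorHandleIncrementSize.
inline MockCpuHandle OffsetHandle(MockCpuHandle heapStart, std::uint32_t index,
                                  std::uint32_t incrementSize) {
    assert(incrementSize > 0);
    return MockCpuHandle{ heapStart.ptr + static_cast<std::size_t>(index) * incrementSize };
}
```

With a heap starting at 0x1000 and an increment of 32, index 0 resolves to 0x1000 and index 1 to 0x1020. A single-buffer FBO always uses index 0, which is why it can take the heap start directly.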

This part has a lot of source code, so the explanation is given as comments directly in the code.

FrameBufferRT* BeginRenderFrame(ID3D12GraphicsCommandList* inCommandList) {

// Switch state: change the screen buffer (sRenderTargets) from the "present" state to the "writable" state

D3D12_RESOURCE_BARRIER barrier0 = InitResourceBarrier(sRenderTargets[sCurrentFrameIndex], D3D12_RESOURCE_STATE_PRESENT, D3D12_RESOURCE_STATE_RENDER_TARGET);

inCommandList->ResourceBarrier(1, &barrier0);

FrameBufferRT* frameBuffer = new FrameBufferRT;

frameBuffer->mColorBuffer.mDescriptorHeap = sRTVDescriptorHeap;

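// Compute the address: the swap chain usually has 2 images (front/back), and sCurrentFrameIndex selects which one to draw into. This is why there is an offset addition here, while the HDR FBO does not need one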

frameBuffer->mColorBuffer.mRTV.ptr = frameBuffer->mColorBuffer.mDescriptorHeap->GetCPUDescriptorHandleForHeapStart().ptr + sCurrentFrameIndex * GetDirect3DDevice()->GetDescriptorHandleIncrementSize(D3D12_DESCRIPTOR_HEAP_TYPE_RTV);

// Set the format: sColorRTFormat (usually R8G8B8A8), i.e. the ordinary screen format

frameBuffer->mColorBuffer.mFormat = sColorRTFormat;

frameBuffer->mDSBuffer.mDescriptorHeap = sDSDescriptorHeap;

frameBuffer->mDSBuffer.mRTV = frameBuffer->mDSBuffer.mDescriptorHeap->GetCPUDescriptorHandleForHeapStart();

frameBuffer->mDSBuffer.mFormat = sDSRTFormat;

// Bind: tell the GPU that the output is now the [screen]

inCommandList->OMSetRenderTargets(1, &frameBuffer->mColorBuffer.mRTV, FALSE, &frameBuffer->mDSBuffer.mRTV);

D3D12_VIEWPORT viewport = { 0.0f,0.0f,float(sViewportWidth),float(sViewportHeight),0.0f,1.0f };

D3D12_RECT scissorRect = { 0,0,sViewportWidth,sViewportHeight };

inCommandList->RSSetViewports(1, &viewport);

inCommandList->RSSetScissorRects(1, &scissorRect);

const float clearColor[] = { 0.1f, 0.4f, 0.6f, 1.0f };

// Clear color: fills the screen with "sky blue" { 0.1f, 0.4f, 0.6f, 1.0f }

inCommandList->ClearRenderTargetView(frameBuffer->mColorBuffer.mRTV, clearColor, 0, nullptr);

inCommandList->ClearDepthStencilView(frameBuffer->mDSBuffer.mRTV, D3D12_CLEAR_FLAG_DEPTH | D3D12_CLEAR_FLAG_STENCIL, 1.0f, 0, 0, nullptr);

return frameBuffer;

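}

EndRenderFrame(rhiCommandList.mCommandList);: the same block of video memory is in different states at different times. While rendering, it must be in the RENDER_TARGET state; while being displayed, it must be in the PRESENT state. This call records a resource barrier, which acts as a synchronization point telling the GPU that all writes to this image must be finished here. Without it, the display could receive only part of the pixels, producing a torn image.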
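The state rule described above can be modeled as a tiny state machine. In the sketch below a transition only succeeds when its "before" state matches the state the buffer is actually in, which is the bookkeeping that D3D12 leaves to the application. MockState, MockBackBuffer, Transition, and CanPresent are hypothetical names; the real API performs no such check at record time, although the debug layer reports mismatches.

```cpp
#include <cassert>

// Hypothetical subset of resource states, named after the D3D12 ones used here.
enum class MockState { Present, RenderTarget };

// Tracks the state a back buffer is currently in.
struct MockBackBuffer {
    MockState state = MockState::Present;
};

// A transition is only valid when `before` matches the tracked state.
inline bool Transition(MockBackBuffer& buffer, MockState before, MockState after) {
    if (buffer.state != before) {
        return false;   // mismatched barrier: the frame's bookkeeping is wrong
    }
    buffer.state = after;
    return true;
}

// Presenting is only legal from the Present state.
inline bool CanPresent(const MockBackBuffer& buffer) {
    return buffer.state == MockState::Present;
}
```

In this model BeginRenderFrame corresponds to Transition(Present, RenderTarget) and EndRenderFrame to Transition(RenderTarget, Present). Skipping the second call leaves the buffer in a state from which it cannot be presented.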

void EndRenderFrame(ID3D12GraphicsCommandList* inCmdList) {

D3D12_RESOURCE_BARRIER frameEndBerrier = InitResourceBarrier(sRenderTargets[sCurrentFrameIndex], D3D12_RESOURCE_STATE_RENDER_TARGET, D3D12_RESOURCE_STATE_PRESENT);

inCmdList->ResourceBarrier(1, &frameEndBerrier);

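}

Building the PBR Algorithm Framework

This part sets up a basic framework for writing the PBR algorithm. It does not contain the actual rendering formulas yet, only the skeleton.

Create two hlsl files (a single file also works; it depends on how you prefer to organize the code).

pbr_vs.hlsl: vertex shader (Vertex Shader - geometry stage)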

struct VertexData{

float4 position:POSITION;

float4 texcoord:TEXCOORD0;

float4 normal:NORMAL;

};

struct VertexOutput{

float4 position:SV_POSITION;

float4 texcoord:TEXCOORD0;

float4 normal:NORMAL;

float4 positionWS:TEXCOORD1;

};

cbuffer X:register(b0){

float4x4 ProjectionMatrix;

float4x4 ViewMatrix;

float4x4 ModelMatrix;

float4x4 ITModelMatrix;

float4x4 Reserverd[1020];

};

VertexOutput main(VertexData inVertexData){

VertexOutput o;

float4 positionWS=mul(ModelMatrix, float4(inVertexData.position.xyz,1.0f));

float4 positionVS=mul(ViewMatrix,positionWS);

float4 positionCS=mul(ProjectionMatrix,positionVS);

float3 normalWS=mul(ITModelMatrix,float4(inVertexData.normal.xyz,0.0f)).xyz;

o.position=positionCS;

o.texcoord=inVertexData.texcoord;

o.normal=float4(normalWS,0.0f);

o.positionWS=positionWS;

return o;

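}

VertexData: the raw position (model space), the texture coordinates (UV), and the raw normal (model space).

VertexOutput: the interface between the vertex shader (VS) and the pixel shader (PS). It defines which data is passed on for pixel shading after the GPU has processed the geometry. The members are the clip-space position, the texture coordinates (UV), the world-space normal, and the world-space position.

cbuffer X: the constant buffer. Its members are, in declaration order, the projection matrix, the view matrix, the model matrix, the normal transform matrix, and reserved padding (1020 matrices, which brings the buffer to 1024 matrices, or 64 KB).

main: the core logic.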
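The vertex shader transforms the normal with a separate matrix, ITModelMatrix, instead of ModelMatrix. Assuming the name stands for the inverse transpose of the model matrix, the sketch below shows why that matters under non-uniform scaling. It uses a pure scale so the inverse transpose is trivial to compute; Vec3, Dot, ApplyScale, and InverseTransposeScale are hypothetical helpers.

```cpp
#include <array>
#include <cassert>

using Vec3 = std::array<float, 3>;

inline float Dot(const Vec3& a, const Vec3& b) {
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
}

// Applies a diagonal (pure scale) matrix, enough to show the effect
// without a full matrix class.
inline Vec3 ApplyScale(const Vec3& scale, const Vec3& v) {
    return { scale[0] * v[0], scale[1] * v[1], scale[2] * v[2] };
}

// For a diagonal matrix the inverse transpose is the reciprocal of each factor.
inline Vec3 InverseTransposeScale(const Vec3& scale) {
    assert(scale[0] != 0.0f && scale[1] != 0.0f && scale[2] != 0.0f);
    return { 1.0f / scale[0], 1.0f / scale[1], 1.0f / scale[2] };
}
```

Take a tangent (1, 1, 0) with normal (1, -1, 0) and scale x by 2. Transforming the normal with the scale itself gives (2, -1, 0), which is no longer perpendicular to the scaled tangent (2, 1, 0), since their dot product is 3. Transforming it with the inverse transpose gives (0.5, -1, 0), whose dot product with the scaled tangent is 0.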

pbr_ps.hlsl: fragment/pixel shader (Pixel Shader - rasterization stage)

struct PSInput{

float4 position:SV_POSITION;

float4 texcoord:TEXCOORD0;

float4 normal:NORMAL;

float4 positionWS:TEXCOORD1;

};

float4 main(PSInput inPSInput):SV_TARGET{

float3 finalColor=float3(0.0f,0.0f,0.0f);

float3 ambientColor=float3(0.0f,0.0f,0.0f);

float3 diffuseColor=float3(0.0f,0.0f,0.0f);

float3 specularColor=float3(0.0f,0.0f,0.0f);

finalColor=ambientColor+diffuseColor+specularColor;

return float4(finalColor,1.0f);

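}

These are the basic ambient, diffuse, and specular terms. All three are placeholders set to zero for now.

Setting Up the Material Sphere Code

The updated Scene.cpp is as follows: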

#include "Scene.h"

#include "BattleFireDirect3D12.h"

#include "FrameBuffer.h"

#include "Node.h"

#include "Camera.h"

#include "Material.h"

FrameBuffer* gHDRFBO = nullptr;

Node* gNode = nullptr;

DirectX::XMMATRIX gProjectionMatrix;

Camera gMainCamera;

void InitSphere(ID3D12GraphicsCommandList* inCommandList) {

gNode = new Node;

StaticMeshComponent* staticMesh = new StaticMeshComponent;

staticMesh->InitFromFile(inCommandList, "Res/Model/Sphere.staticmesh");

gNode->mStaticMeshComponent = staticMesh;

Material* material = new Material(L"Res/pbr_vs.hlsl", L"Res/pbr_ps.hlsl");

material->SetCullMode(D3D12_CULL_MODE_FRONT);


gNode->mStaticMeshComponent->mMaterial = material;

}

void InitScene(int inWidth, int inHeight)

{

gProjectionMatrix = DirectX::XMMatrixPerspectiveFovLH(

DirectX::XMConvertToRadians(45.0f), float(inWidth) / float(inHeight),

0.1f, 1000.0f

);

gMainCamera.Init(DirectX::XMVectorSet(0.0f, 0.0f, 0.0f, 1.0f),

5.0f, DirectX::XMVectorSet(0.0f,-0.2f,1.0f,0.0f));

gHDRFBO = new FrameBuffer;

gHDRFBO->SetSize(inWidth, inHeight);

gHDRFBO->AttachColorBuffer(DXGI_FORMAT_R32G32B32A32_FLOAT);

gHDRFBO->AttachDepthBuffer();

RHICommandList rhiCommandList;

InitSphere(rhiCommandList.mCommandList);

}

void RenderOneFrame(float inDeltaTime)

{

//ldr

RHICommandList rhiCommandList;

//draw skybox

//draw material sphere

FrameBufferRT* rt = gHDRFBO->BeginRendering(rhiCommandList.mCommandList);

gNode->Draw(rhiCommandList.mCommandList, gProjectionMatrix, gMainCamera, rt->mColorBuffer.mFormat, rt->mDSBuffer.mFormat);

gHDRFBO->EndRendering(rhiCommandList.mCommandList);

delete rt;

rt = BeginRenderFrame(rhiCommandList.mCommandList);

//tone mapping, hdr -> swapchain

EndRenderFrame(rhiCommandList.mCommandList);

delete rt;

}

About Debugging

In the project properties, under the post-build event, enter the following:

editbin /subsystem:console $(OutDir)$(ProjectName).exe

More will be added later.